Overview

Exoscale’s Scalable Kubernetes Service (SKS) is a fully managed Kubernetes offering designed to simplify the deployment, scaling, and management of containerized applications. SKS provides a production-ready control plane, seamless integration with Exoscale infrastructure, and supports the full Kubernetes ecosystem.

Terminology

- Cluster

- A virtual entity encapsulating a Kubernetes control plane and one or more nodepools.

- Control Plane

- The set of components that manage the lifecycle of a Kubernetes cluster, including the API server, scheduler, and controller manager.

- InstancePool

- A group of similar Compute instances whose lifecycle is managed by the scheduler, created from a set of user-specified instance properties (such as size, template, and security groups).

- NodePool

- A group of compute instances managed by SKS, serving as worker nodes in your Kubernetes cluster.

- Node

- A compute instance within a NodePool that runs Kubernetes workloads.

Features

Scalable Kubernetes Service has an expansive feature set:

- Managed Control Plane

- SKS delivers a fully managed, highly available Kubernetes control plane, eliminating the need for manual setup and maintenance.

- Dynamic NodePools

- Easily scale your workloads by adding or removing NodePools, which are groups of compute instances managed by SKS.

- Full Cluster Lifecycle Management

- Create, upgrade, and delete clusters on demand. SKS supports automatic upgrades to the latest Kubernetes patch versions.

- Seamless Integration with Exoscale Services

- Leverage Exoscale’s Network Load Balancer, Block Storage via CSI driver, and other services directly within your SKS clusters.

- Customizable NodePools

- Use any Exoscale instance type within your NodePools, including CPU-optimized, memory-optimized, and GPU instances.

- High Availability (Pro Plan)

- The Pro Cluster Plan offers a resilient, highly available control plane to keep your workloads operational, plus automatic scaling of control plane resources.

- CNCF Certified

- SKS is certified by the Cloud Native Computing Foundation, ensuring compatibility and support for standard Kubernetes APIs.

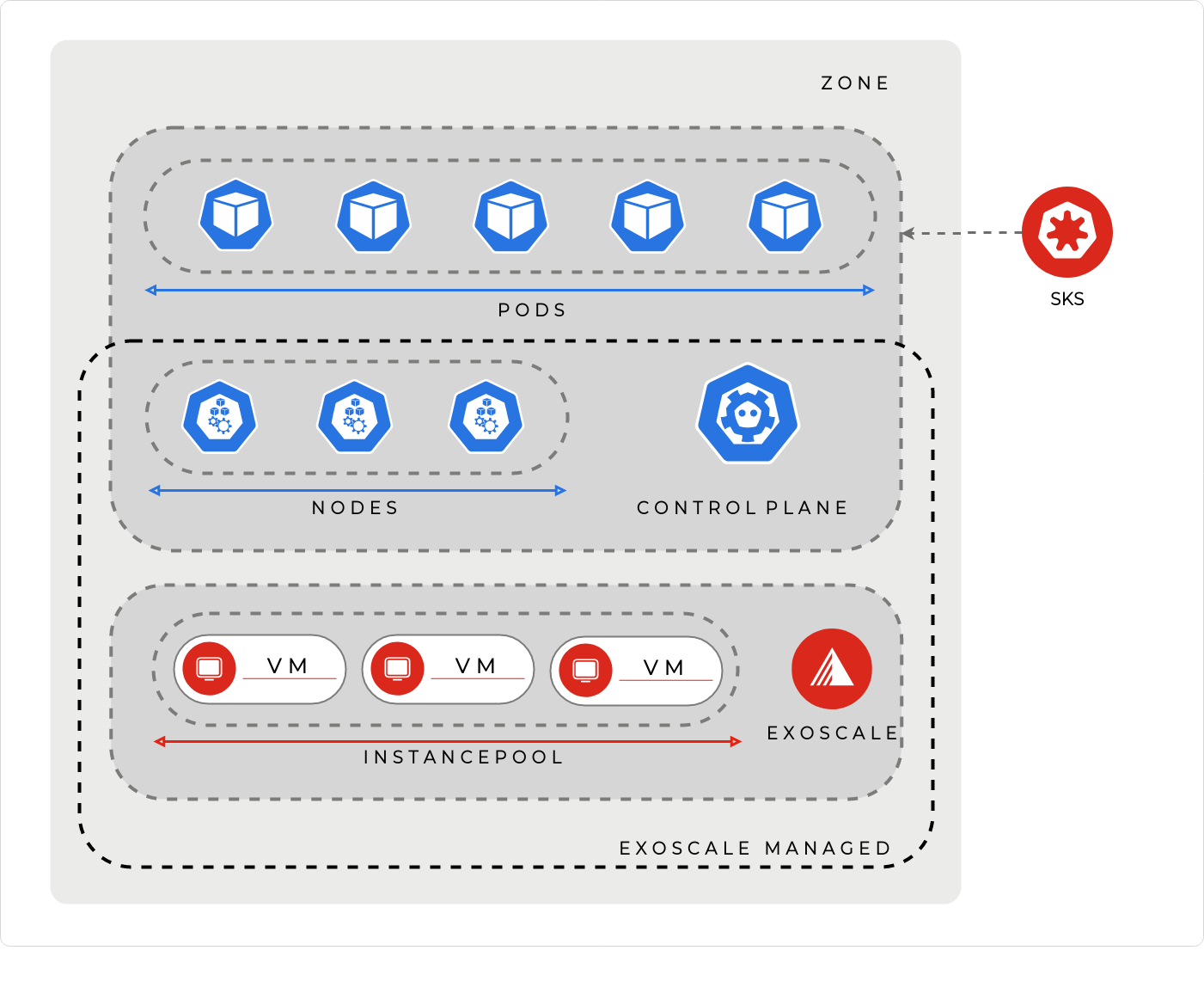

Architecture

Following is a breakdown of the overall SKS architecture.

Pricing Tiers

Exoscale offers SKS in two tiers, Starter and Pro, each tailored to different use cases:

| Feature | STARTER | PRO |
|---|---|---|
| Usage | Development, testing, proof of concepts | Production workloads requiring high availability |
| API | Yes | Yes |
| CLI | Yes | Yes |
| Terraform Support | Yes | Yes |
| Control Plane Scaling | No | Yes |
| Auto-Upgrade | Opt-in | Opt-in |
| High Availability | No | Yes |
| Backup of etcd | No | Min. Daily |
| SLA | No | 99.95% |
| Price | Free | See Pricing |

Note

Starter clusters without attached NodePools will be deleted after 30 days of inactivity.

Control Plane

Exoscale runs the Kubernetes control plane for you—including the API Server, Controller Manager, Scheduler, and Konnectivity Server—ensuring high availability (in Pro plans), security, and minimal operational overhead. You don’t manage or access the control plane directly.

Several components are deployed when you create a cluster. Some run on the Exoscale side, and some run in your cluster.

Control Plane Components (Exoscale-managed)

- The Kubernetes API Server.

- The Kubernetes Controller Manager.

- The Kubernetes Scheduler.

- The Konnectivity Server, which provides a TCP-level proxy for communication from the control plane to the cluster.

- The Exoscale Cloud Controller Manager, which is also deployed by default.

In-Cluster Components (Deployed Automatically)

- Kube Proxy to forward traffic to pods (using the `iptables` mode).

- CoreDNS to manage the cluster DNS. The CoreDNS ConfigMap (named `coredns` in the `kube-system` namespace) is never updated by Exoscale after the first deployment, so you can override it if needed.

- The Konnectivity agent is also deployed on the cluster.

- Calico is deployed by default to manage the cluster network. You can also choose to deploy a cluster without CNI plugins.

- Metrics server is also deployed by default. You can use it to gather pod and node metrics, and to auto-scale your deployments.
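As an illustration of auto-scaling a deployment from Metrics server data, the following is a minimal sketch that builds a standard Kubernetes `HorizontalPodAutoscaler` manifest (API version `autoscaling/v2`) as a Python dictionary and prints it as JSON, a format `kubectl apply -f -` accepts. The helper name `build_cpu_hpa`, the Deployment name `web`, the replica bounds, and the 70% CPU target are assumptions made for this example, not SKS defaults.

```python
import json


def build_cpu_hpa(deployment: str, min_replicas: int, max_replicas: int,
                  cpu_percent: int) -> dict:
    """Return an autoscaling/v2 HorizontalPodAutoscaler manifest for a Deployment."""
    if not 1 <= min_replicas <= max_replicas:
        raise ValueError("require 1 <= min_replicas <= max_replicas")
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": f"{deployment}-hpa"},
        "spec": {
            # The workload whose replica count is adjusted.
            "scaleTargetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            # Scale on average CPU utilization, relative to the pods' CPU requests.
            "metrics": [{
                "type": "Resource",
                "resource": {
                    "name": "cpu",
                    "target": {
                        "type": "Utilization",
                        "averageUtilization": cpu_percent,
                    },
                },
            }],
        },
    }


if __name__ == "__main__":
    # "web" and the numbers below are placeholder values for the example.
    print(json.dumps(build_cpu_hpa("web", 2, 10, 70), indent=2))
```

Utilization targets are computed against each container's CPU request, so the target Deployment needs CPU requests set for this kind of autoscaler to work.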

Additional Features

- Rapid cluster provisioning (≈2 minutes)

- Automated or manual Kubernetes upgrades

- Private networking and NLB support

- CLI, API, Terraform, Pulumi, and web UI integration

- OIDC integration for secure RBAC access

NodePools

A NodePool is a logical group of Kubernetes workers. When you create a NodePool, you specify:

- the characteristics for the workers (instance disk size, instance type, firewall or anti-affinity rules, …)

- the number of workers

The worker instances (or virtual machines) will be provisioned and will join the cluster automatically.

A cluster can have multiple NodePools, each with its own characteristics depending on what you need.

NodePools are backed by Instance Pools. When a NodePool is created, a new Instance Pool named `nodepool-<nodepool-name>-<nodepool-id>` is also created.

Note

You cannot interact with this Instance Pool directly. It will be managed completely by Exoscale depending on the NodePool state.

If you want to scale your NodePool, or evict machines from it, you need to target the NodePool, not the Instance Pool.

Important

A NodePool with an anti-affinity group cannot contain more than 8 nodes.
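The NodePool rules described in this section can be sketched as a small local check, which may be handy before submitting a request through the CLI, API, or Terraform. `NodePoolSpec`, `validate`, and `instance_pool_name` are hypothetical names invented for this example and are not part of any Exoscale SDK. The instance type string and the IDs are placeholders. How the NodePool ID is rendered in the Instance Pool name (full or shortened) is not specified here, so the helper simply follows the `nodepool-<nodepool-name>-<nodepool-id>` pattern.

```python
from dataclasses import dataclass, field
from typing import List

# Limit stated in this document for NodePools that use an anti-affinity group.
MAX_NODES_WITH_ANTI_AFFINITY = 8


@dataclass
class NodePoolSpec:
    """Hypothetical local model of a NodePool request (not an Exoscale API type)."""
    name: str
    size: int
    instance_type: str
    anti_affinity_groups: List[str] = field(default_factory=list)

    def validate(self) -> None:
        """Raise ValueError if the spec breaks a rule described in this section."""
        if self.size < 0:
            raise ValueError("size must not be negative")
        if self.anti_affinity_groups and self.size > MAX_NODES_WITH_ANTI_AFFINITY:
            raise ValueError(
                f"a NodePool with an anti-affinity group cannot contain more "
                f"than {MAX_NODES_WITH_ANTI_AFFINITY} nodes (requested {self.size})"
            )


def instance_pool_name(nodepool_name: str, nodepool_id: str) -> str:
    """Build the backing Instance Pool name from the documented pattern."""
    return f"nodepool-{nodepool_name}-{nodepool_id}"


if __name__ == "__main__":
    spec = NodePoolSpec("workers", 8, "standard.medium", ["aag-example"])
    spec.validate()  # passes: 8 nodes is within the limit
    print(instance_pool_name(spec.name, "example-id"))
```

The derived name is only useful for recognizing the Instance Pool in listings; scaling and eviction still go through the NodePool.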

Exoscale Cloud Controller Manager

The Exoscale Cloud Controller Manager performs several actions on the cluster:

- Validates nodes. When a node joins the cluster, the controller will enable it and approve the Kubelet server certificate signing request.

- Manages load balancers. The controller automatically provisions and manages Exoscale Network Load Balancers based on Kubernetes services of type `LoadBalancer`, giving your cluster a highly available load balancing setup.
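To make the load balancer behavior concrete, here is a minimal sketch of the kind of Kubernetes Service the controller reacts to. It builds a plain `v1` Service manifest with `spec.type` set to `LoadBalancer` and prints it as JSON for `kubectl apply -f -`. The helper name, the Service name `web`, the `app` label, and the port numbers are assumptions for the example. No Exoscale-specific annotations are shown, since this section does not describe any.

```python
import json


def build_load_balancer_service(name: str, app_label: str,
                                port: int, target_port: int) -> dict:
    """Return a core/v1 Service manifest of type LoadBalancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # This type is what asks the cloud controller for a load balancer.
            "type": "LoadBalancer",
            # Pods carrying this label receive the traffic.
            "selector": {"app": app_label},
            "ports": [{
                "protocol": "TCP",
                "port": port,               # port exposed by the load balancer
                "targetPort": target_port,  # port the pods listen on
            }],
        },
    }


if __name__ == "__main__":
    # Placeholder values; adjust to match your own workload.
    print(json.dumps(build_load_balancer_service("web", "web", 80, 8080), indent=2))
```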

When the Exoscale Cloud Controller is enabled, an IAM key is automatically provisioned on your account. You can find more information about this Cloud Controller IAM key (and how to rotate it) in the SKS Certificates and Keys section.

Caution

If your SKS cluster is deployed with managed CCM enabled, you should not install it yourself. Otherwise your own CCM setup may conflict with the managed one and cause unexpected behavior.

Service Level and Support

Exoscale’s Scalable Kubernetes Service (SKS) offers a fully managed control plane with defined service levels and support scopes.

Control Plane SLA

In the Pro service tier, the following Kubernetes control plane components are covered by a 99.95% Service Level Agreement (SLA):

- `etcd`

- `kube-apiserver`

- `kube-scheduler`

- `kube-controller-manager`

- Exoscale Cloud Controller Manager (CCM)

This ensures high availability and reliability for the core components managing your cluster’s state and operations.

Node-Level SLA

While the control plane is managed by Exoscale, the worker nodes within your SKS cluster are your responsibility. Each node is subject to Exoscale’s standard compute SLA of 99.95%, ensuring consistent performance and uptime for your workloads.
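To put the 99.95% figure in perspective, the sketch below converts an availability percentage into a downtime budget. It assumes a 30-day measurement window purely for illustration; the actual measurement period and exclusions are defined by Exoscale's SLA terms, not by this example. Under that assumption, 99.95% leaves 21.6 minutes of allowed downtime per window. The function name is hypothetical.

```python
from decimal import Decimal


def allowed_downtime_minutes(sla_percent: str, period_days: int = 30) -> Decimal:
    """Return the downtime budget, in minutes, for an availability target.

    sla_percent is passed as a string (for example "99.95") so that Decimal
    can represent it exactly, avoiding binary floating-point rounding.
    """
    period_minutes = Decimal(period_days) * 24 * 60
    unavailable_fraction = (Decimal(100) - Decimal(sla_percent)) / Decimal(100)
    return period_minutes * unavailable_fraction


if __name__ == "__main__":
    # The 30-day window is an assumption made for this example.
    print(allowed_downtime_minutes("99.95"), "minutes per 30 days")
```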

In-Cluster Components

Exoscale deploys several essential components within your SKS clusters to facilitate networking, service discovery, and storage integration:

- Container Network Interface (CNI) plugins: `Calico` or `Cilium`

- `CoreDNS`

- `Konnectivity`

- `kube-proxy`

- Container Storage Interface (CSI) driver

These components are not covered by the SKS SLA due to the shared responsibility model. However, Exoscale provides best-effort support, including upgrade tools and operational guidance. In certain scenarios, support may request temporary access to your cluster’s kubeconfig to assist with troubleshooting.

Best Practices for Stability

To ensure optimal performance and supportability of your SKS cluster:

- Resource Management

- Set appropriate resource requests and limits for all workloads. This practice helps prevent resource contention and ensures fair scheduling across the cluster.

- Monitoring Node Health

- Regularly monitor the health status of your nodes. If nodes are reported as “NotReady” or if core components exhibit unexpected behavior, verifying resource configurations can aid in diagnosis.
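One way to act on the resource management advice is to check manifests before they are applied. The following is a minimal sketch, assuming a pod spec already loaded into a Python dictionary (for example from a Deployment's `spec.template.spec`). It reports containers that lack `resources.requests` or `resources.limits`. The function name and the sample container names are hypothetical.

```python
from typing import Dict, List

REQUIRED_SECTIONS = ("requests", "limits")


def containers_missing_resources(pod_spec: dict) -> Dict[str, List[str]]:
    """Map each container name to the resource sections it does not define."""
    missing: Dict[str, List[str]] = {}
    for container in pod_spec.get("containers", []):
        resources = container.get("resources") or {}
        # A section counts as missing when it is absent or empty.
        absent = [s for s in REQUIRED_SECTIONS if not resources.get(s)]
        if absent:
            missing[container.get("name", "<unnamed>")] = absent
    return missing


if __name__ == "__main__":
    # Sample pod spec: "api" is fully specified, "sidecar" has no resources.
    pod_spec = {
        "containers": [
            {
                "name": "api",
                "resources": {
                    "requests": {"cpu": "250m", "memory": "128Mi"},
                    "limits": {"cpu": "500m", "memory": "256Mi"},
                },
            },
            {"name": "sidecar"},
        ]
    }
    print(containers_missing_resources(pod_spec))
    # prints {'sidecar': ['requests', 'limits']}
```

A check like this only confirms that values are present; choosing suitable values still depends on observing the workload's actual usage.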

Following these best practices helps maintain cluster stability and simplifies troubleshooting, enabling faster and more effective support when needed.